From Chatbots to Multi-Tool Systems: What Every Developer Should Know

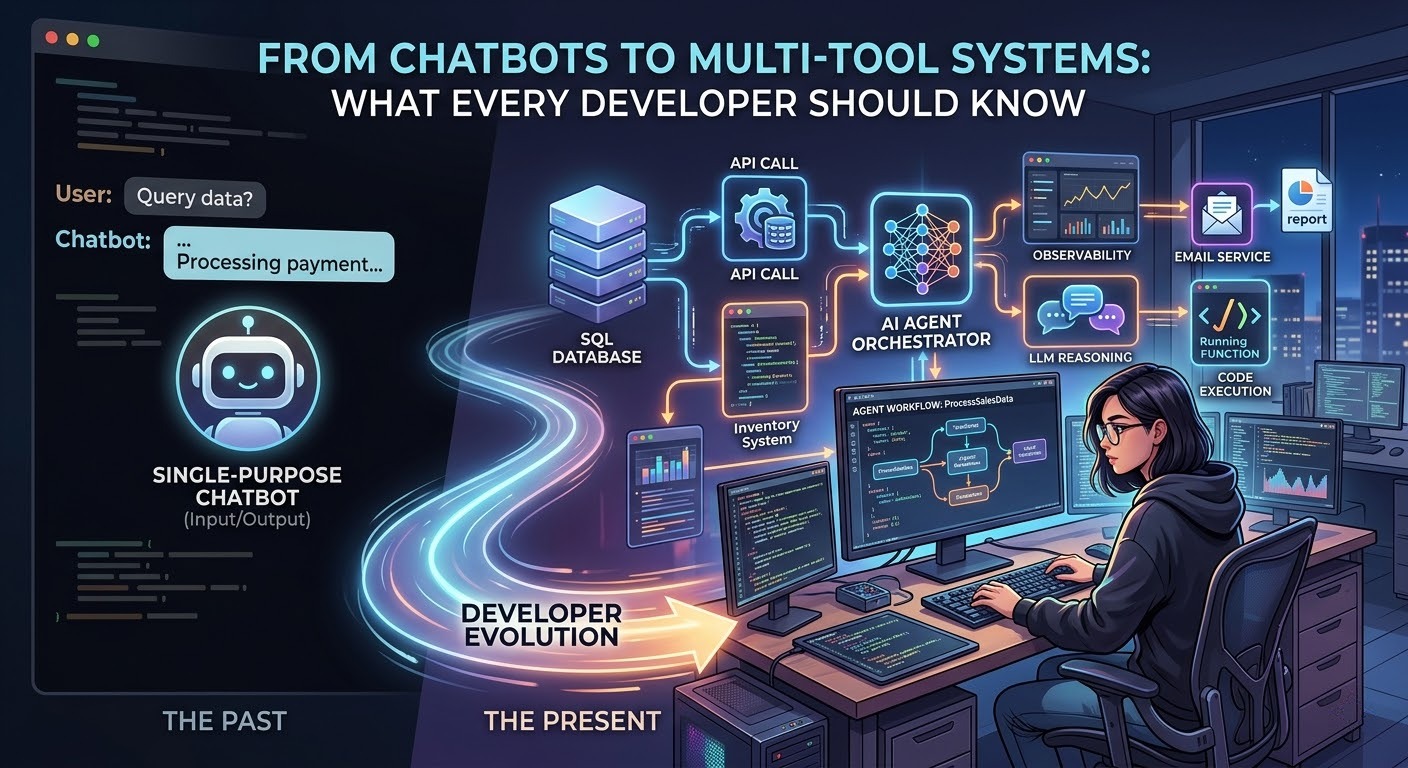

For the last couple of years, integrating AI into an application usually meant one thing: wrapping an API call to a Large Language Model (LLM) and returning a text response to the user. You send a prompt, you get a string back. It was revolutionary, but ultimately, standard LLMs are just highly advanced autocomplete engines confined to their chat windows.

The industry is now undergoing a massive paradigm shift from generative AI to agentic AI. We are moving from models that simply "talk" to systems that "do." For developers, this means shifting from string manipulation to orchestrating complex, autonomous software systems.

Here is what you need to know about building and scaling multi-tool AI systems.

1. The Core Difference: Workflows vs. Agents

When building AI that takes action, developers generally choose between two architectures:

- Workflows (The Reliable Pipeline): You dictate the control flow. The code retrieves context, calls a specific tool, feeds that data to the LLM, and handles the output. Every step is explicit and predictable.

- Agents (The Autonomous Loop): The LLM decides the control flow. You give the agent a goal and a toolbox (APIs, database access, search functions). The agent enters a recursive reasoning loop: it decides which tool to use, evaluates the result, and determines what to do next until the goal is met.

The Reality Check: Workflows are like a highly reliable script; agents are like hiring a brilliant but slightly chaotic intern. Workflows are cheaper and easier to debug. Agents are highly flexible but prone to infinite loops and unpredictable behavior.
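To make the contrast concrete, here is a minimal sketch of both shapes. Everything in it is an assumption made for illustration: `ScriptedLLM` is a stand-in for a real model client, `get_profile` is a made-up tool, and the `CALL <tool> <arg>` / `FINAL <answer>` reply convention is invented for this example rather than taken from any particular framework.

```python
from typing import Callable


# Hypothetical tool: a plain function standing in for a real API call.
def get_profile(user_id: str) -> str:
    return f"profile for {user_id}"


TOOLS: dict[str, Callable[[str], str]] = {"get_profile": get_profile}


class ScriptedLLM:
    """Stand-in for a model client. Counts calls so the cost is visible."""

    def __init__(self, replies: list[str]):
        self.replies = list(replies)
        self.calls = 0

    def complete(self, prompt: str) -> str:
        self.calls += 1
        # Play the script in order; repeat the last reply once it runs out.
        return self.replies.pop(0) if len(self.replies) > 1 else self.replies[0]


def run_workflow(llm: ScriptedLLM, user_id: str) -> str:
    # The code owns the control flow: one tool call, then one model call.
    context = get_profile(user_id)
    return llm.complete(f"Summarize: {context}")


def run_agent(llm: ScriptedLLM, goal: str, max_steps: int = 5) -> str:
    # The model owns the control flow; the step cap guards against runaway loops.
    history = [f"GOAL: {goal}"]
    for _ in range(max_steps):
        reply = llm.complete("\n".join(history))
        if reply.startswith("FINAL "):
            return reply[len("FINAL "):]
        # Assumed reply format: "CALL <tool> <arg>"
        _, tool_name, arg = reply.split(" ", 2)
        history.append(f"{tool_name} -> {TOOLS[tool_name](arg)}")
    raise RuntimeError(f"no final answer after {max_steps} steps")
```

The workflow always makes exactly one model call. The agent makes one call per reasoning step, and a model that never emits `FINAL` would keep going forever without the `max_steps` cap, which is where both the cost and the infinite-loop risk come from.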

2. Moving from "Generating" to "Executing"

To understand the architectural shift, consider a standard business application—for example, a custom Point of Sale (POS) system built in C#.

If you integrate a standard conversational chatbot into that POS, it acts as a smart user manual. A cashier might ask, "How do I process a split payment?" and the AI will output a well-formatted list of instructions.

A multi-tool agentic system, however, possesses perception and execution capabilities. If a manager types, "Analyze yesterday's top-selling items and email a restock report to the warehouse," the agentic system takes over:

- Perception & Tool Use 1: It calls your SQL database tool to query the previous day's transaction logs.

- Reasoning: It analyzes the JSON data returned from the database to identify the highest volume items.

- Tool Use 2: It calls an internal inventory API to check current stock levels against the sales data.

- Execution: It formats the findings and triggers your email service tool (e.g., SendGrid/SMTP) to dispatch the report.

The system isn't just generating text; it is orchestrating state changes across your backend infrastructure.
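The four steps above can be sketched as follows. This is an illustration only: the tools are in-memory stubs, and names such as `query_sales`, `check_stock`, `send_email`, and the sample data are hypothetical. In a real agent the model would choose this sequence of tool calls at runtime; here the sequence is written out by hand so the trajectory is easy to read.

```python
from collections import Counter

# Hypothetical in-memory stand-ins for the SQL, inventory, and email tools.
SALES_LOG = [
    {"sku": "A1", "qty": 5},
    {"sku": "B2", "qty": 2},
    {"sku": "A1", "qty": 4},
    {"sku": "C3", "qty": 7},
]
STOCK = {"A1": 3, "B2": 40, "C3": 10}
OUTBOX: list[tuple[str, str]] = []


def query_sales(day: str) -> list[dict]:
    return list(SALES_LOG)  # a real tool would run a SQL query for `day`


def check_stock(sku: str) -> int:
    return STOCK[sku]  # a real tool would call the inventory API


def send_email(to: str, body: str) -> None:
    OUTBOX.append((to, body))  # a real tool would call SendGrid or SMTP


def top_sellers(rows: list[dict], n: int = 2) -> list[tuple[str, int]]:
    totals: Counter = Counter()
    for row in rows:
        totals[row["sku"]] += row["qty"]
    return totals.most_common(n)


def restock_report(day: str, to: str) -> str:
    rows = query_sales(day)                      # Perception & Tool Use 1
    ranked = top_sellers(rows)                   # Reasoning
    lines = [
        f"{sku}: sold {sold}, in stock {check_stock(sku)}"  # Tool Use 2
        for sku, sold in ranked
    ]
    body = "\n".join(lines)
    send_email(to, body)                         # Execution
    return body
```

Calling `restock_report("yesterday", "warehouse@example.com")` appends one message to `OUTBOX`. That append is the state change: the last step is a side effect on another system, not a string shown to the user.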

3. The Developer's Reality: New Bottlenecks

Building multi-tool systems introduces entirely new engineering challenges that don't exist in standard web development or basic LLM integration.

- Infrastructure & Observability: Because decisions live inside dynamic reasoning loops rather than explicit code, debugging is a nightmare without the right tools. You will need a dedicated observability platform (like Langfuse or AgentOps) to trace exactly why an agent chose one tool over another.

- Cost Overruns: A simple workflow might cost you $500/month for a set volume of interactions. A multi-agent system handling the same volume can easily cost 10x to 15x more, because every reasoning step is another model call and each call consumes tokens.

- The Need for Evals: You cannot rely on manual QA for an agent. If an agent goes rogue, it does so at machine speed. You need strict, automated evaluations (evals) running in the background to validate an agent's output against your business logic before it takes a final action.
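As a rough sketch of that last point, an eval gate can be as simple as a list of checks that every proposed action must pass before it runs. The rules below (an allow-list of tools, internal-only email recipients) and the `example.com` domain are hypothetical placeholders for your own business logic.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProposedAction:
    tool: str
    args: dict = field(default_factory=dict)


# Hypothetical business rules. Each returns an error message, or None if OK.
def check_allowed_tool(action: ProposedAction) -> Optional[str]:
    allowed = {"query_sales", "send_email"}
    return None if action.tool in allowed else f"tool not allowed: {action.tool}"


def check_internal_recipient(action: ProposedAction) -> Optional[str]:
    if action.tool != "send_email":
        return None
    to = action.args.get("to", "")
    return None if to.endswith("@example.com") else f"external recipient: {to}"


CHECKS = [check_allowed_tool, check_internal_recipient]


def evaluate(action: ProposedAction) -> list[str]:
    """Run every check and collect the failures. An empty list means pass."""
    return [err for check in CHECKS if (err := check(action)) is not None]
```

The agent loop would call `evaluate` on each action the model proposes and execute it only when the returned list is empty. Because the checks are ordinary functions, they can also run in CI against recorded agent traces.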

4. Multi-Agent Systems (Microservices for AI)

Instead of building one massive "God Agent" with 50 tools, the enterprise standard is shifting toward multi-agent orchestration—essentially a microservices architecture for AI.

You break complex problems down and assign them to specialized agents. For instance, in a software development pipeline, you might have:

- A Requirements Agent that breaks down user stories.

- An Architecture Agent that selects the best design patterns.

- A Coding Agent (perhaps utilizing a framework like React or specialized JS libraries) that writes the implementation.

These agents are coordinated by a central "Orchestrator" LLM that delegates tasks and keeps the specialized agents working in harmony, which reduces the cognitive load (and the context-window pressure) on any single model.
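A minimal sketch of the delegation pattern, under simplifying assumptions: each specialist is a plain function standing in for its own model call and prompt, and the orchestrator follows a fixed order instead of asking an LLM whom to delegate to next. All function names here are hypothetical.

```python
from typing import Callable


# Hypothetical specialists. Each would wrap its own model, prompt, and tools.
def requirements_agent(task: str) -> str:
    return f"stories for: {task}"


def architecture_agent(stories: str) -> str:
    return f"design for: {stories}"


def coding_agent(design: str) -> str:
    return f"code for: {design}"


PIPELINE: list[tuple[str, Callable[[str], str]]] = [
    ("requirements", requirements_agent),
    ("architecture", architecture_agent),
    ("coding", coding_agent),
]


def orchestrate(task: str) -> dict[str, str]:
    """Pass the task through each specialist and keep every artifact."""
    artifacts: dict[str, str] = {}
    current = task
    for name, agent in PIPELINE:
        # Each agent receives only the previous artifact, not the full
        # history, which keeps its context small.
        current = agent(current)
        artifacts[name] = current
    return artifacts
```

The design choice worth noticing is the narrow hand-off: each specialist sees one input and produces one output, so no single model has to hold the whole project in its context window.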

5. Best Practices for Implementation

If you are looking to build multi-tool systems today, follow these guidelines:

- Start with Workflows, Not Agents: If a process can be mapped deterministically (e.g., retrieving a user profile and summarizing it), build a hardcoded workflow. Do not use an autonomous agent for predictable tasks.

- Use Agents for the "Messy" Middle: Reserve agentic loops for tasks where the inputs and decision trees are highly variable and unpredictable.

- Human-in-the-Loop (HITL): Never give an agent unconstrained write access to a production database or the ability to send unvetted communications to clients. Always insert a checkpoint where a human must approve the agent's proposed action.
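The human checkpoint can be sketched as a small wrapper around any side-effecting action. This is one possible shape, not a prescribed one: `run_with_approval`, `Outcome`, and the `approve` callback are names invented for this example, and in production the callback might post to a review queue instead of prompting on a console.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Outcome:
    approved: bool
    result: Optional[str] = None


def run_with_approval(
    description: str,
    action: Callable[[], str],
    approve: Callable[[str], bool],
) -> Outcome:
    """Run `action` only if the human reviewer approves its description."""
    if not approve(description):
        # The action is never invoked, so no side effect can occur.
        return Outcome(approved=False)
    return Outcome(approved=True, result=action())


def console_approver(description: str) -> bool:
    # Simple console reviewer; anything other than "y" counts as a rejection.
    return input(f"Agent wants to: {description}. Approve? [y/N] ").strip().lower() == "y"
```

For example, `run_with_approval("email restock report to warehouse", send_report, console_approver)` would only call the hypothetical `send_report` after a reviewer types `y`. Passing the action as a callable, not its result, is what guarantees nothing happens before approval.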

Efraim Ray
